News Story

New Lecture Series Unites Experts to Solve Critical Challenges

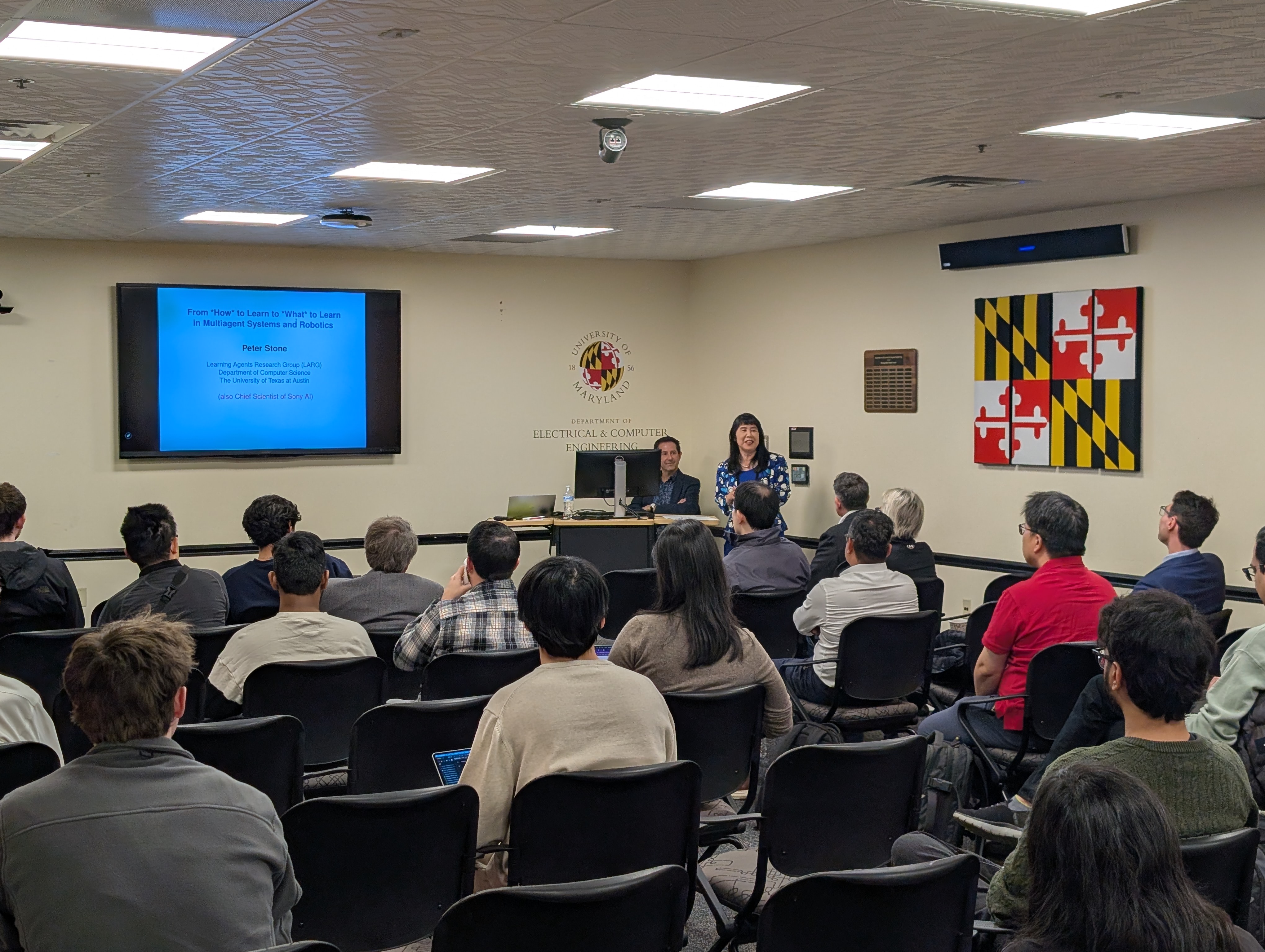

A new lecture series unites the University of Maryland (UMD) A. James Clark School of Engineering and the Clark School MATRIX Lab with defense leader HII to explore critical and emerging technology areas.

The “Engineering Futures Colloquium Series,” sponsored by HII’s Mission Technologies Division and hosted by the MATRIX Lab, aims to effectively translate research into impact by bringing together experts representing industry, government, and academia. Connecting this diverse cohort will ensure all voices in the innovation pipeline are heard, closing the gaps that prevent systems from being fielded and providing attendees with unique insights into the ecosystem of modern engineering.

The next presentation is on May 7, 2026, with Dr. Joseph S. Jewell. Registration for that event is now open. The series launched on April 9, 2026, with Dr. Peter Stone, a Professor of Computer Science at The University of Texas at Austin.

In the opening remarks of Dr. Stone’s presentation, John Bell, Chief Technology Officer for HII Mission Technologies, emphasized the importance of the series.

“When a leading research university and a mission-driven industry team come together with shared purpose, we strengthen not only our organizations but the next generation of innovators and the nation’s technology ecosystem,” Mr. Bell said. “Together, we can combine ideas, plan what’s next, and create solutions to the most critical challenges facing our world today.”

Teaching AI Agents What to Learn

Dr. Ming Lin, Distinguished University Professor in the UMD Department of Computer Science, coordinated Dr. Stone’s visit and introduced his lecture.

Dr. Stone’s presentation emphasized that AI advancement is shifting focus from how agents learn to what they should learn. He shared that opportunities to identify what to learn present themselves when:

- tasks are too difficult or the environment is too big,

- future tasks are unknown,

- other agents are involved, and

- humans are reacting to the agent’s actions.

He shared several approaches for addressing these situations. When tasks are too difficult, researchers can use transfer learning, teaching the agent something simpler and then working up to something more complicated, or task decomposition, breaking a big task into smaller ones and learning the easiest first. When the environment is too big, tasks must be prioritized.

Dr. Stone stressed the importance of learning skills in both simulated and real-world environments, showing videos of robots playing soccer on a field and driving racecars on a virtual track. He pointed out, however, that simulations often have reality gaps. He recommended the SLAC (Simulation-Pretrained Latent ACtion Space for Real-World RL) method for teaching robots complex tasks in the real world, which makes it easier and safer for robots to try something when future tasks are unknown.

When other agents are involved, Dr. Stone said, the system needs to learn how to adapt to others by choosing the right partners to learn from. Teammates may behave unpredictably or poorly, and humans know to adapt to that, but AI does not. Dr. Stone suggested making learning ongoing and adaptive by pairing the agent with teammates that challenge it but still teach it.

Dr. Stone’s last point was that robots have the opportunity to learn when humans are reacting to their actions. Humans can provide feedback through reinforcement learning, which can be anything from judging behavior as good or bad to intervening to correct mistakes or show the desired behavior. Humans can also provide implicit feedback through facial expressions, body language, and emotions, but that requires mapping these responses and teaching the robot what’s positive and what’s negative.

This research adds a philosophical element to optimizing robot behavior. By considering agency, values, and alignment, both researchers and systems can work out what is worth learning and why. Dr. Stone’s work sets the stage for a future where AI systems can continuously adapt what they learn based on context, collaborators, and human feedback. This will enable more autonomous, intuitive, and human-aligned machines that operate effectively in complex real-world environments.

Published April 27, 2026